Understanding Property Record Data Standards: PRIA, MISMO & Why They Matter

Emotion detection is important for deep learning and AI research. Models perform best when they can recognize and contextualise human emotions. Deployment of this technology gives access to insights into customers that helps improve products and services.

Table of Contents

Many high-stakes industries like healthcare, security or automotive are using emotion detection in a big way for their models. Despite this, models often fail in real-world scenarios. The reason being that faces are often not clearly visible; they are at angles, covered or with masks. Sometimes low lighting also adds to inaccurate capture of emotions. It is not about model problems but more because of annotation quality.

Industries claiming to use the best models, advanced architecture, CNNs and transformers still fail due to a lack of accurate training data. Annotation is the pillar of high model performance. Bias and misclassification due to poor annotation hits model performance.

It often happens that emotion recognition models appear accurate in controlled labs and even achieve 65-70% accuracy on benchmark datasets such as FER2013 but the performance drops drastically in real world scenarios. The performance gap can be improved with better training data.

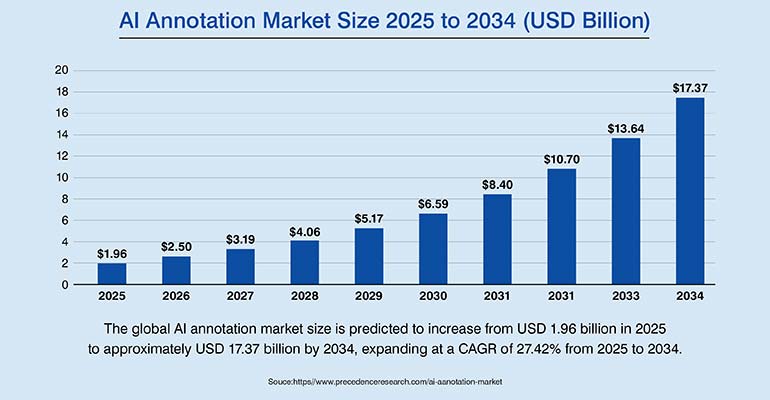

The global affective computing market size was estimated at USD 62.53 billion in 2023 and is projected to reach USD 388.28 billion by 2030, growing at a CAGR of 30.6% from 2024 to 2030. The high investment and unreliable models, the gap is concerning.

There is an urgent need for annotation accuracy. The models don’t need high volumes of data, but better labelled data. And emotion data is most challenging to annotate as it involves subjective interpretation of expressions, depends on contexts and micro expressions.

Here in this blog, we cover annotation techniques that train emotion models and technically sound workflows.

Struggling with inconsistent emotion detection accuracy?

When annotating images for objects such as cars, pedestrians, or traffic lights, most annotators can easily agree on what is present in the image. But emotion datasets are tricky; they are context dependent and open to interpretations. This often gets subjective and without a structured labelling framework annotators can’t reach consensus. What you need here are quality control mechanisms with consensus labelling and guidelines.

Developed by Paul Ekman, Ekman taxonomy classifies human emotions based on facial expressions. These are built on 7-emotion taxonomy. Paul Ekman’s emotions include anger, fear, disgust, happiness, sadness, surprise, and contempt.

This has become industry standard for labelling facial emotions and major datasets such as FER2013 (≈35,000 images) and AffectNet (1M+ images) use these emotion classes to train emotion recognition models.

But these human emotions may not always appear pure and isolated; they often get blended. Sometimes you see emotions that is a combination of multiple emotions such as fear, surprise or even happy. This adds to the confusion, making labelling difficult.

A single emotion is assigned to each image, which speeds up dataset creation, but it struggles to capture the complex human expressions. The training datasets starts looking at emotions as a single category, which can often mislead.

This creates issues because in real world situations simple emotions don’t work. Human emotions are often mixed and complex. This is a challenge in emotion detection systems. Here Facial Action Coding System (FACS) developed by Ekman and Friesen helps. Expressions are broken and identified into 46 distinct facial action units, each linked to a specific muscle movement. This supports correct emotion identification.

This also has its own challenges. Your annotators must be FACS-certified, and such talent is not easily available. FACS-based annotation is slower, more complex, and more expensive than standard emotion labeling.

Different cultures interpret emotions in different ways as people react differently to each situation. To address this the annotation team must be from different cultural backgrounds so that any kind of bias can be avoided. Cultural and geographical diversity among annotators results in balanced emotion labels.

The annotation method used in a dataset directly shapes what an AI model can learn from it. You need to select the technique best suited to your model’s needs. Some models may rely on facial geometry; some may need pixel-level detail, while for some, body posture and gestures provide important emotional cues. Some applications also require compound emotion labels, where multiple emotional states may appear together. The technique you choose must match the model’s objective, or else the dataset may fail to teach the model the behavior it needs to learn.

Here are the different image annotation techniques that can help you in accurate emotion detection.

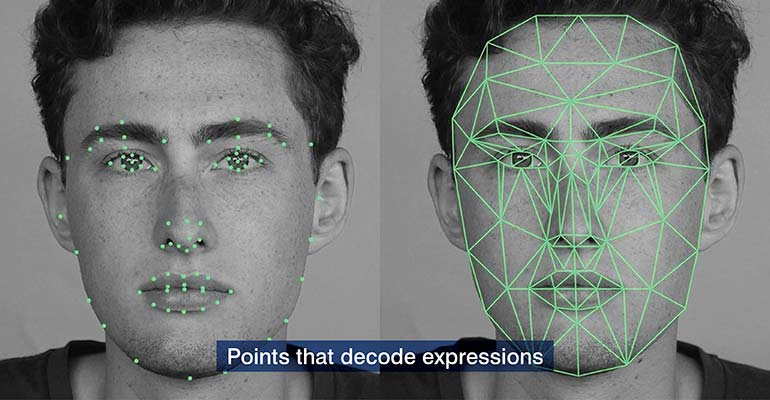

This technique captures the geometry of the face by marking specific points on facial locations. Usually, 68 landmark points, which is a dlib standard, is used to map the facial structure. For more detailed tracking, higher density schemas with 98 points are also used.

Here AI models can measure how facial features move during expressions and correspond closely to Facial Action Units (FACS). This makes landmark annotation effective in capturing emotions without FACS.

This technique struggles when facial features are partially hidden and when landmark points are blocked, the model may lose the geometric structure needed for reliable analysis. In such cases polygon or segmentation annotation can be used to capture the visible regions of the face.

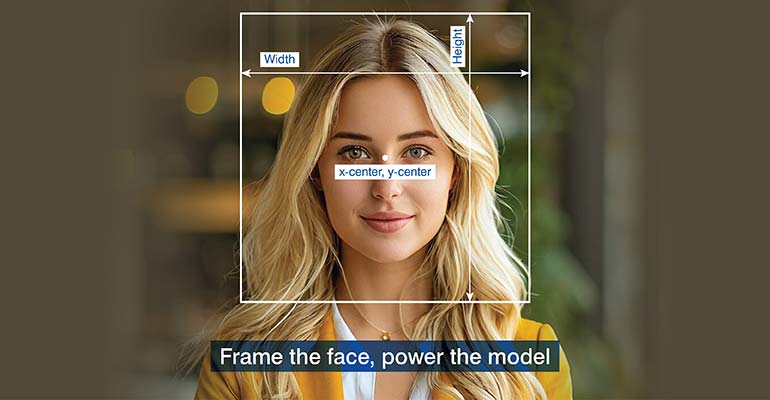

Bounding boxes annotation draw rectangular boxes around faces, giving clarity to the area that model must focus on. This helps AI systems locate faces within an image. Many face detection models such as MTCNN, RetinaFace etc. use these labelled boxes to identify faces.

But again, accuracy is very important here. The box should be perfect; a little loose or too tight may misguide the model. Loose box may include elements not needed, while a tight box can crop things. A perfect box must focus only on the face. Any inaccuracy here may continue in the later stages such as detection, expression classification, and action unit analysis.

Polygon and instance segmentation support AI-based emotion detection by isolating facial regions, which helps them capture expression-relevant areas more accurately than simple bounding boxes.

Polygons, unlike bounding boxes, outline the face following boundaries around lips, eyebrows and eyes. These areas express emotions and they need to be captured and analysed. In crowded areas where faces are often partially covered or angled. Here polygons help by outlining the exact contours of the face.

And then instance segmentation separates each detected face as an independent object. This gives each face its own segmentation mask. This helps in the analysis of emotional expressions for multiple individuals simultaneously. Check image segmentation services for a better understanding of the process.

Traditional emotion recognition systems focus primarily on facial expressions which can provide ambiguous emotional signals. There are many other elements like surrounding people, posture or the context that defines the true emotion. So, just the facial expression cannot be a correct emotion.

Semantic segmentation assigns a label to every pixel in an image. It is not just about the face; the model identifies multiple scene elements simultaneously, like the human body, background objects, surrounding people in the scene, and other environmental features. It is a step beyond just face analysis and moves towards scene understanding.

The context is important as the cues help disambiguate similar facial expressions. The integration of scene semantics with emotional annotation helps infer context and combined emotions. AI can interpret emotional states by capturing signals beyond just facial expressions.

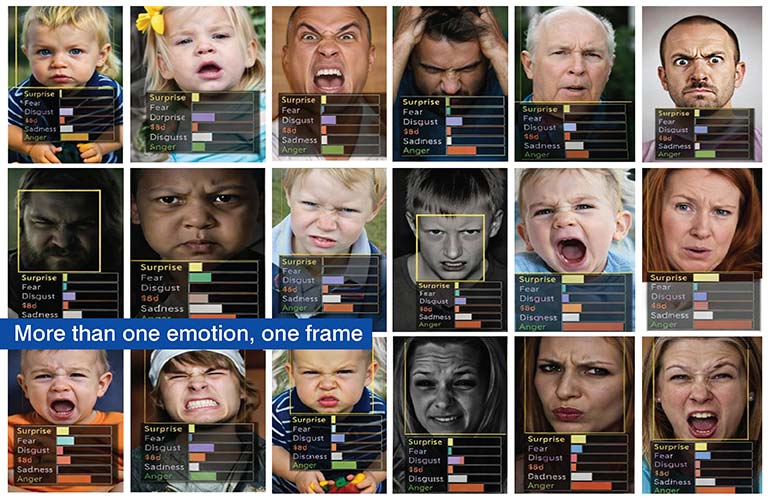

Here multiple labels are assigned to a single face, which helps annotators to interpret the single strongest emotion from the multiple ones.

It could be happiness plus surprise, or fear plus surprise or maybe sadness with anger. So, it is no longer about exclusive categories but more of overlapping emotional states.

This is necessary for naturalistic data because in such a scenario pure Ekman expressions occur rarely. Most frames capture transitional or mixed emotional states. Real world emotional complexity gets challenging when emotion detection models are trained on single-label datasets.

Disagreements between annotators, inconsistent tagging and rare emotions require advanced quality control mechanisms.

Table 1: Annotation Technique Comparison

| Annotation Type | Best For | Landmark Density | Occlusion Handling | Typical Accuracy |

|---|---|---|---|---|

| Keypoint / Landmark | Facial AU mapping, expression tracking | 68–98 points | Poor (>30° yaw fails) | 92–96% on FER2013 |

| Bounding Box | Face detection pre-processing | N/A | Moderate | IoU-dependent |

| Polygon / Instance Seg. | Occluded / multi-face scenes | Variable | Strong | 85–93% mIoU |

| Semantic Segmentation | DMS, full-body affect recognition | Pixel-level | Strong | 88–95% mIoU |

| Multi-Label | Compound expressions (naturalistic data) | N/A — class labels | N/A | Requires Kappa > 0.70 |

Inter-annotator agreement measurement (IAA) is important for a dataset to be operationally effective. A Cohen’s Kappa above 0.75 is more important for operational efficiency than a 500,000-image corpus with a low score. A model performs on the basis of the labels it is given. Any inaccuracy in the label will result in the model learning those mistakes instead of improving.

Cohen’s Kappa is a measure that decides the level of annotator agreement. Higher level is required in emotion labelling for better performance. Any score below 0.60 is unreliable, while 0.70 is considered good. For high stakes or clinical use 0.80 is desired. High agreement between annotators is needed for high-performing models.

The threshold is applied to each label and not to each image. For instance, if there is an image that is labelled as ‘fearful surprise’ we need to check agreement on both fear and surprise separately. So, we have two separate Kappa measurements.

There is a difference between consensus and a majority vote. Majority vote works faster but can often get biased while the consensus vote is slow but accurate. In case of a majority vote if 2 out of 3 annotators agree on a certain emotion, it becomes the final label. But this ignores minority opinions.

In case of weighted consensus, each annotator’s confidence is considered, which produces more accurate labels, especially for tricky cases.

Annotator drift is a common problem that needs to be fixed. It happens when over a time the label accuracy starts getting inconsistent. Repetitive work often leads to this kind of drift. This kind of drift needs to be fixed. You can insert gold-standard images that is the known correct labels. Once this is into the workflow, the annotators’ labels can be compared with the correct answers using kappa. It is important to run regular calibration checks and realign annotators.

Annotation inconsistency could limit your model’s performance.

Hitech BPO has a very streamlined workflow that they follow across industries, be it retail, automotive and other emotion detection projects. The structure followed is consistent across all industries, while the techniques, label schema change as per the project requirement.

Table 2: 6-Stage Annotation Pipeline with QA Checkpoints

Build high-precision datasets with structured annotation workflows.

Emotion detection is used across industries to capture human emotions and improve services. Hitech BPO has a set strategy for different sectors where they deploy their services. Here we present each application where we present our strategy, challenges and the techniques we use for each.

Cameras are used by retail stores to capture the sentiments of shoppers. It could be frustration during self-checkout, confusion or engagement with displays. Once these get captured the retail staff can be made available to them immediately to solve the issue. It helps identify customer behaviour that is used later to improve customer experiences.

This presents a challenge to the retail industry as people appear at distances or different angles, and often the faces are blocked by carts or shelves. Here the basic model fails as only the frontal face model may not work. Here one needs to use polygon segmentation to isolate the face. The expressions need to be captured accurately and for that 68-point landmarks are combined.

In one Hitech BPO retail project 1.2M+ fashion and décor images were annotated in just 12 days. The California based retail AI company saw a 96% boost in productivity while maintaining strong annotation precision at scale. Read the complete case study here.

EU regulations (ISO 15008, UN ECE R158) require driver monitoring systems in vehicles. These systems must detect drowsiness, signs of inattention, and any kind of distraction. Even the Euro NCAP sets a high bar where eye closure detection must be 95%+ accurate. And to meet these standards, one requires very high-quality training data.

DMS cameras use infrared and not normal RGB. It is important for the data to include head angles also and not just frontal views, as drivers look in many directions. This is managed by using 98-point landmarks, which supports precise eye tracking. Segmentation is used to separate driver from background while gaze direction labels as an extra layer.

It is important to spot unusual behaviour at airports and other similar facilities through emotion detection and facial recognition. It is to identify people showing reactions that don’t match their situation. Poor image quality is again a challenge here. Blurry images, faces blocked in crowds, matching faces from old footage are often a struggle.

Multiple viewers help get a clear label, avoiding vague tagging and ensuring consistency even with blurry or ambiguous faces. Guesswork is completely avoided and constant updates enable long-term, reliable identification.

Understanding patient feelings and emotions without relying on self-reports is important in mental health. Teletherapy and clinical tools use emotion AI to capture the right emotion, but they need to follow strict rules as data privacy is important.

They must capture micro expressions under 200ms and detect subtle or suppressed emotions and follow HIPAA rules with full de-identification before annotation begins. Clinical emotion AI demands ultra-precise labeling and strict data privacy compliance.

To capture very subtle emotions, annotation uses FACS coding at the AU (muscle movement) level by trained experts. It also adds valence, arousal, and dominance scores for deeper emotion analysis. Video is annotated frame-by-frame (at least 30 fps) to track how expressions change over time.

Emotion detection is one of the most legally sensitive areas in AI. Compliance is very important and must be followed strictly. And the responsibility must be shared with both the annotation vendor and the client. One needs to be alert from the beginning and many organizations realize only after any issue arises.

However much you improve your model by adding better algorithms, architecture etc. it will not help improve your model performance. The improvement will come only from better data. What you need is higher IAA, AU-level FACS coding and balanced datasets. Inconsistent and biased labels will never help your models perform.

Now, even the laws are making them mandatory for compliance. What was earlier considered good practice or internal checklist is now a legal requirement. Regulations (like EU AI Act, BIPA) now require data governance, consent records, and bias audit reports. These are no longer optional. You will need them for audits. It is better to prepare now to avoid future compliance issues.

What’s next? Message us a brief description of your project.

Our experts will review and get back to you within one business day with free consultation for successful implementation.

Disclaimer:

HitechDigital Solutions LLP and Hitech BPO will never ask for money or commission to offer jobs or projects. In the event you are contacted by any person with job offer in our companies, please reach out to us at info@hitechbpo.com